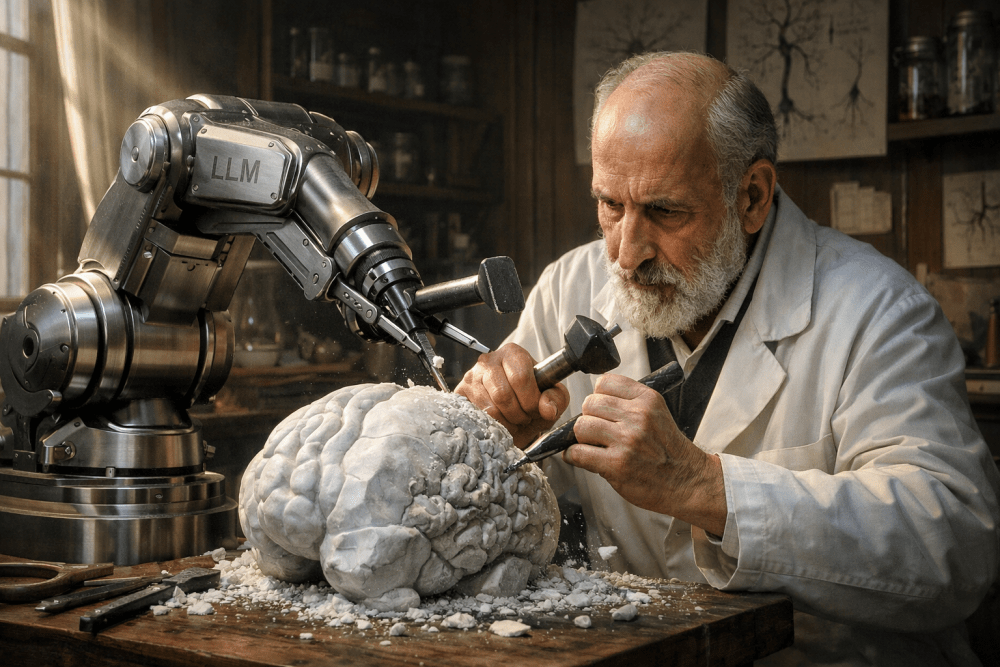

We can all be sculptors of our own brains, if we want to be

The emergence of large language models (LLMs) and their use in our everyday work is silently but profoundly changing the ways in which we think, learn, and make decisions. These effects are especially visible in the field of software engineering. For the first time, we have tools that can not only autocomplete the names of variables and methods, but also propose architectures, write tests, translate between programming languages, and suggest solutions with a fluency that can rival human expertise.

Although researchers are only just beginning to gather empirical evidence on productivity, early results already point to measurable improvements for specific tasks. In a controlled experiment with GitHub Copilot, developers who had access to the assistant completed an implementation task significantly faster than a control group [1]. Productivity gains have also been observed among professionals performing writing tasks with an assistant like ChatGPT, along with converging levels of quality [2]. In addition, industry leaders have recently been making some remarkable statements. When presenting his company’s Q4 results for 2025, the co-CEO of Spotify, Gustav Söderström, said that their developers “have not written even a single line of code since December”. Instead, they are just providing guidance for generative AI tools [3]. Boris Cherny, head of Claude Code, has also stated that “during the last two months, 100% of its code has been written entirely by Opus 4.5” [4]. Even Sam Altman has suggested that GPT-5 Codex has been an active part of its own development, by programming itself [5].

However, this paradigm shift also raises an uncomfortable question, which has inspired this third post in the series on LLMs: what happens to the skills we stop using when we “delegate” some of our cognitive effort?

Discussions in the field of education have become polarized, with some focused on cheating and others on new opportunities. There are also studies describing how widespread access to generative AI tools can modify our behavior (and sometimes our perceptions) with regard to academic integrity. In particular, data collected before and after the explosive popularity of ChatGPT have been analyzed to explore patterns of stability or change in copying and reuse of other people’s work among high school students [6]. Outside the classroom, some authors have started to express concerns about how these tools are reconfiguring our existing habits: if a generated text seems sufficiently correct, we may feel a real temptation not to bother revising it, and its contents may never become integrated into our own mental framework.

At this point, it is worth introducing a distinction: using an LLM as a calculator (as a way to outsource some mechanical calculation) is not the same as using it as a substitute for reasoning (to outsource construction of our own mental model). Some recent studies have begun to suggest that the second kind of use carries risks. They report a connection between higher levels of dependence on AI and lower levels of critical thinking, propose plausible mechanisms (such as cognitive fatigue), and also recognize that AI literacy can be a (partially) moderating factor [7]. In addition, studies of how we learn have compared the use of an LLM against the use of a traditional search engine. In this case, researchers have reported lower levels of mental effort, along with a reduction in the depth of reasoning applied. This suggests that the “ease” of using these solutions can have a negative impact on learning, unless active strategies are applied to compensate for these effects [8].

In software engineering, one way to interpret this “cognitive atrophy” [9] is as a poorly calibrated form of offloading. Steps that used to serve as deliberate training for our creative and analytical skills (for example, looking for coding errors, debugging, designing abstract solutions, or selecting a data structure) are now being transferred to the assistant, to the point where the tasks that remain for us are primarily those involving supervision and correction. Paradoxically, this type of supervision is far from a trivial task.

Earlier research on automation has already warned us that factors such as trust, cognitive load, and risk perception help determine whether we use, misuse, disuse, or abuse automated systems. These are the same factors that may push us towards excessive dependence, and towards underutilization of our own mental capabilities [10]. More recent experiments have observed that simply knowing that a suggestion comes from AI can increase a user’s willingness to follow it, even if it contradicts the contextual information available and the user’s own judgment [11]. In the context of coding, this translates into a tendency to accept plausible suggestions, even if they are flawed. This can have costs that are only recognized later: overlooked errors, technical debt, and most importantly, learning that never properly crystallizes, because our thought process is interrupted before it becomes ingrained.

For younger workers, this risk can be twofold, because emerging engineers may be faced with a dichotomy that we could refer to as Dr. JekyLLM and Mr. Hyde: will they be able to use AI as a tool for learning, or will it represent a dangerous temptation that robs them of their opportunity to learn from their mistakes, at an early stage where those mistakes are still manageable? On one hand, LLMs can speed up delivery while providing a false sense of competence (simply because the generated code allows the system to “work”), and they reduce the amount of time that we used to invest in figuring out why it works. On the other hand, when organizations focus their priorities only on throughput, it is easy for the focus of the training they provide to change as well, moving away from understanding gained through guided exploration and towards merely supervised execution.

In relation to this, usability studies are especially revealing. In tests given to recently graduated engineers, sources of friction begin to emerge (for example, uncertainties about what to accept), along with misunderstandings (about what the AI model can actually guarantee) and strategies for compensation (such as after-the-fact revisions and quick patching). What this means is that simply learning how to write prompts is not enough: young engineers need to learn how to incorporate the use of LLMs without using them as a substitute for proper reasoning [12]. At the same time, comparisons of quality are producing warnings that there may be substantial variability in the correctness, maintainability, and security of code generated with LLMs: the output may appear valid, but at the same time, it may be far from optimal, with significant shortcomings [13].

The point I want to make in this article is that this scenario is not inevitable, and that we could be overlooking an extraordinary opportunity. If we are willing to dedicate some effort in the right direction, this same LLM technology can become a sort of cognitive scaffolding, rather than a cognitive replacement. In other words, it can serve as a tool that, in the words of Santiago Ramón y Cajal, will let us “become sculptors of our own brains”, enhancing our ability to learn at a scale that was hard to imagine in the past.

To achieve this, the first tip (or trick) is to explicitly separate two modes of working, which can in fact complement each other: quick delivery mode and learning mode. In quick delivery mode, the aim is to make progress, but with strict quality controls applied (e.g., testing, static code analysis, and peer review). In learning mode, the goal is still to make progress and apply those controls, but without overlooking the need to strengthen the mental model, such as by asking for alternative solutions and examining their pros and cons; requesting explanations with invariants and complexity; asking the assistant to derive limiting cases and explain the weaknesses of more “naive” solutions; or forcing a “second round”, where the engineer rewrites the solution without looking at the prior results.

This modified approach is consistent with the results of research that has warned of “metacognitive laziness”: even if a tool can improve our final product, it might not be improving our understanding and knowledge, unless a certain degree of self-regulation, reflection, and personal effort is also applied [14]. Also in line with some critical reviews recently published on the subject of AI and learning, it seems as though what really matters here is the specific process that allows us to sculpt ourselves through practice, by putting our focus less on the tool itself and more on how the task is designed, and on the learning process: which cognitive processes are being activated, what type of attention is being stimulated, and what kinds of habits are being solidified [15].

These are strategies that can be put into practice as simple rituals that are compatible with the corporate environment, and with teams that include younger members. For example:

- Use active comparison: engineers can ask an LLM to provide a solution, and then before accepting it, they can write their own solution (even a partial one) and make a comparison.

- Perform a brief post-mortem analysis: engineers can take notes, using their own words, about what was learned (a standard, an API, or a conceptual error) and what types of indicators made it possible for them to detect a problem.

- Deliberately impose restrictions: establish “copilot free zones” (e.g., for code katas, training modules, or revisions), so that younger engineers can gain experience without using assistance.

- Create prompts focused on personalized tutoring: “don’t give me the final code; ask me questions, suggest steps, and validate my own ideas.”

- Use verification as a source of learning: make revision a more intensive intellectual activity (with an emphasis on aspects such as properties, invariants, or stress testing), rather than simply asking “does it pass or fail the test?”
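As an illustration of the last ritual, here is a minimal sketch of what checking properties and invariants, rather than a single pass/fail example, could look like. Everything in it is hypothetical and chosen only for illustration: `merge_intervals` stands in for any function an assistant might suggest, and `covered_points`, `check_properties`, and `stress_test` are helper names invented for this example. The idea is to compare the suggestion against a deliberately naive reference over many random inputs.

```python
import random


def merge_intervals(intervals):
    """Hypothetical assistant-suggested function: merge overlapping closed intervals."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            # Overlaps (or touches) the previous interval: extend it.
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([start, end])
    return [tuple(pair) for pair in merged]


def covered_points(intervals):
    """Naive reference: the set of integer points covered by the intervals."""
    return {p for start, end in intervals for p in range(start, end + 1)}


def check_properties(intervals):
    """Assert the invariants we expect from any correct merge."""
    result = merge_intervals(intervals)
    # Invariant 1: the output is sorted and consecutive intervals do not overlap.
    for (_, prev_end), (next_start, _) in zip(result, result[1:]):
        assert prev_end < next_start
    # Invariant 2: every interval is well formed.
    assert all(start <= end for start, end in result)
    # Invariant 3: merging neither adds nor loses covered points.
    assert covered_points(result) == covered_points(intervals)


def stress_test(rounds=500, seed=0):
    """Run the property checks on many small random inputs."""
    rng = random.Random(seed)
    for _ in range(rounds):
        intervals = []
        for _ in range(rng.randint(0, 8)):
            start = rng.randint(0, 30)
            intervals.append((start, start + rng.randint(0, 5)))
        check_properties(intervals)
    return rounds


if __name__ == "__main__":
    print(f"{stress_test()} random cases checked")
```

Writing the three invariants by hand is where the learning happens: it forces the reviewer to state what “correct” means before trusting the suggestion. In a real project, a property-based testing library could replace the hand-rolled random loop.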

When this type of approach is applied, the LLM is no longer being used as a shortcut that lets us “turn off our brain”. Instead, it can serve as a multiplier of our curiosity and our possibilities for professional growth, like a sort of intellectual sparring partner, which has certain limitations but also 24/7 availability, and with the engineer still responsible for the thinking applied.

Ultimately, the key elements here are deliberate practice and continuous learning, so that, in line with the quotation attributed to Benjamin Franklin, we can keep our skills “sharp”. Part of my own work involves making AI evolve, so that it can be a useful and responsible tool in areas where the standards of quality are very high. In this article, I have tried to share what I have learned, so that anyone (engineers who are just starting out as well as those who have already spent decades in the industry) can reflect upon these ideas and share their own experience. If the posts in this series have given you a new, calmer way of looking at AI, even in an atmosphere saturated with hype, then they have served their purpose.

Author: David Miraut

References:

[1] S. Peng, E. Kalliamvakou, P. Cihon, and M. Demirer, “The Impact of AI on Developer Productivity: Evidence from GitHub Copilot”, Feb. 13, 2023, arXiv: arXiv:2302.06590. doi: 10.48550/arXiv.2302.06590.

[2] S. Noy and W. Zhang, “Experimental evidence on the productivity effects of generative artificial intelligence”, Science, vol. 381, no. 6654, pp. 187–192, Jul. 2023, doi: 10.1126/science.adh2586.

[3] “Spotify asegura que sus programadores no han escrito ‘ni una sola línea de código’ en 2026 y eso dice mucho del futuro que nos aguarda” (in Spanish; “Spotify says that its programmers have not written ‘even a single line of code’ in 2026, and this tells us a lot about the future ahead of us”). Viewed on: Feb. 24, 2026. [Online]. Available at: https://www.elconfidencial.com/tecnologia/2026-02-14/programadores-spotify-no-escriben-codigo-1qrt_4302850/

[4] M. Zeff, “How Claude Code Is Reshaping Software–and Anthropic”, WIRED. Viewed on: Feb. 24, 2026. [Online]. Available at: https://www.wired.com/story/claude-code-success-anthropic-business-model/

[5] B. Edwards, “How OpenAI is using GPT-5 Codex to improve the AI tool itself”, Ars Technica. Viewed on: Feb. 24, 2026. [Online]. Available at: https://arstechnica.com/ai/2025/12/how-openai-is-using-gpt-5-codex-to-improve-the-ai-tool-itself/

[6] V. R. Lee, D. Pope, S. Miles, and R. C. Zárate, “Cheating in the age of generative AI: A high school survey study of cheating behaviors before and after the release of ChatGPT”, Comput. Educ. Artif. Intell., vol. 7, p. 100253, Dec. 2024, doi: 10.1016/j.caeai.2024.100253.

[7] J. Tian and R. Zhang, “Learners’ AI dependence and critical thinking: The psychological mechanism of fatigue and the social buffering role of AI literacy”, Acta Psychol. (Amst.), vol. 260, p. 105725, Oct. 2025, doi: 10.1016/j.actpsy.2025.105725.

[8] M. Stadler, M. Bannert, and M. Sailer, “Cognitive ease at a cost: LLMs reduce mental effort but compromise depth in student scientific inquiry”, Comput. Hum. Behav., vol. 160, p. 108386, Nov. 2024, doi: 10.1016/j.chb.2024.108386.

[9] N. Kosmyna et al., “Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task”, Dec. 31, 2025, arXiv: arXiv:2506.08872. doi: 10.48550/arXiv.2506.08872.

[10] R. Parasuraman and V. Riley, “Humans and Automation: Use, Misuse, Disuse, Abuse”, Hum. Factors, vol. 39, no. 2, pp. 230–253, Jun. 1997, doi: 10.1518/001872097778543886.

[11] A. Klingbeil, C. Grützner, and P. Schreck, “Trust and reliance on AI – An experimental study on the extent and costs of overreliance on AI”, Comput. Hum. Behav., vol. 160, p. 108352, Nov. 2024, doi: 10.1016/j.chb.2024.108352.

[12] P. Vaithilingam, T. Zhang, and E. L. Glassman, “Expectation vs. Experience: Evaluating the Usability of Code Generation Tools Powered by Large Language Models”, in Extended Abstracts of the 2022 CHI Conference on Human Factors in Computing Systems, in CHI EA ’22. New York, NY, USA: Association for Computing Machinery, Apr. 2022, pp. 1–7. doi: 10.1145/3491101.3519665.

[13] B. Yetiştiren, I. Özsoy, M. Ayerdem, and E. Tüzün, “Evaluating the Code Quality of AI-Assisted Code Generation Tools: An Empirical Study on GitHub Copilot, Amazon CodeWhisperer, and ChatGPT”, Oct. 22, 2023, arXiv: arXiv:2304.10778. doi: 10.48550/arXiv.2304.10778.

[14] Y. Fan et al., “Beware of metacognitive laziness: Effects of generative artificial intelligence on learning motivation, processes, and performance”, Br. J. Educ. Technol., vol. 56, no. 2, pp. 489–530, 2025, doi: 10.1111/bjet.13544.

[15] E. Bauer, S. Greiff, A. C. Graesser, K. Scheiter, and M. Sailer, “Looking Beyond the Hype: Understanding the Effects of AI on Learning”, Educ. Psychol. Rev., vol. 37, no. 2, p. 45, Apr. 2025, doi: 10.1007/s10648-025-10020-8.